From Share of Search to Share of Answer: The New Digital Market Share

For the past two decades, executive leadership has relied on a simple, effective proxy for digital market dominance: Share of Search. This metric, which measures a brand’s visibility relative to its competitors, governed multi-million-dollar SEO budgets and shaped digital strategy.

In January 2026, this paradigm is no longer sufficient.

The foundational shift in how information is discovered, driven by the integration of generative AI into search engines, has rendered Share of Search a trailing indicator of a bygone era. Our analysis indicates that a new, more unforgiving metric has emerged as the definitive measure of digital market share.

We call it Share of Answer.

If your brand is not consciously and systematically building its presence within this new framework, it is on a path to strategic invisibility.

What is “Share of Answer”?

Share of Answer is the percentage of times a brand, its data, or its intellectual property is presented as the primary, cited source in an AI-generated response to a high-value user query.

It is a direct measure of a brand’s authority and relevance not just to a search engine’s index, but to the AI model’s foundational knowledge base.
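One way to make this definition operational, assuming a fixed set $Q$ of tracked high-value queries and treating every query as equally weighted (both are illustrative assumptions, not part of the definition itself):

$$
\mathrm{SoA}(b) \;=\; \frac{100\%}{|Q|} \sum_{q \in Q} \mathbf{1}\!\left[\,\mathrm{primary}(r_q) = b\,\right]
$$

Here $r_q$ is the AI-generated response to query $q$, $\mathrm{primary}(r_q)$ is the brand presented as its primary cited source, and $\mathbf{1}[\cdot]$ equals 1 when the condition holds and 0 otherwise. Weighting each term by the commercial value of $q$ is a natural variant.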

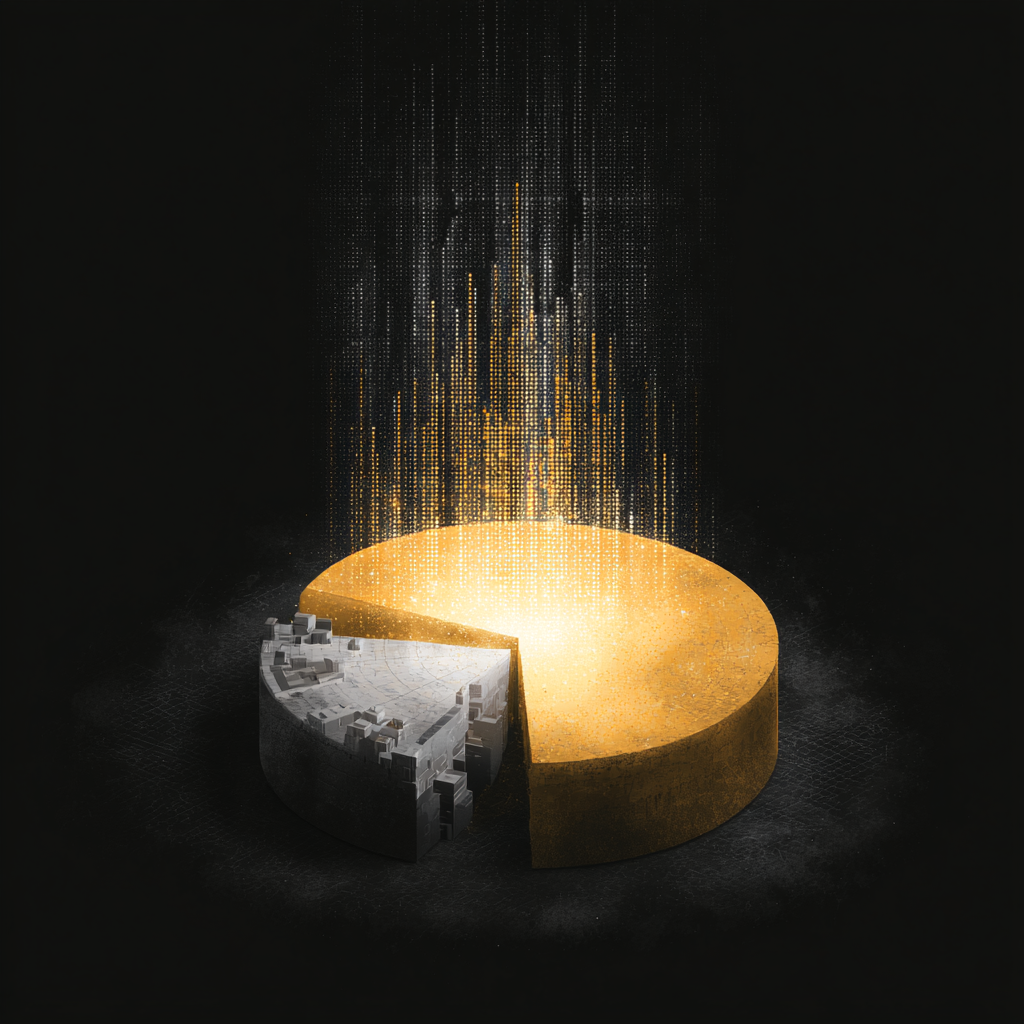

The “Winner-Takes-All” Shift

Google’s classic “10 blue links” model was a forgiving landscape. Securing position #3 still offered significant traffic. The user synthesized the answer from multiple sources.

AI-driven search engines, from Perplexity to Gemini, operate on a fundamentally different principle. They deliver a synthesized, definitive conclusion and typically foreground only one or two primary sources.

The rest? They are invisible. There is no second page. There is only the answer and the sources that powered it. For any given query, a brand’s Share of Answer is therefore all or nothing: it is either a cited source or it is absent.

Why Legacy SEO Fails to Capture Share of Answer

Legacy SEO is built to win on a human-scanned page, prioritizing keywords and backlinks. AI search requires machine-readable, entity-based knowledge.

From Keywords to Entities

Traditional SEO targets strings of text (keywords). AI models operate on entities and their relationships.

- SEO: Targets “best wealth management platform.”
- AI: Understands “wealth management platform” and “high net worth individual” as interconnected nodes in a knowledge graph.

To be the source, your content must define and relate these entities more clearly than anyone else. A simple blog post is no longer sufficient.
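To illustrate the difference between matching a keyword string and relating entities, here is a minimal sketch that stores hypothetical (subject, predicate, object) triples in an adjacency map. The triples, predicates, and helper names are assumptions made for illustration; real AI systems build and query far richer representations.

```python
from collections import defaultdict

# Hypothetical (subject, predicate, object) triples. They show how entities can
# be linked explicitly; they are not taken from any real knowledge graph.
TRIPLES = [
    ("wealth management platform", "serves", "high net worth individual"),
    ("wealth management platform", "is a", "financial service"),
    ("high net worth individual", "is a", "investor"),
]


def build_graph(triples):
    """Index triples as an adjacency map: entity -> list of (predicate, entity)."""
    graph = defaultdict(list)
    for subject, predicate, obj in triples:
        graph[subject].append((predicate, obj))
    return graph


def related_entities(graph, entity):
    """Return the entities reachable from `entity` through one outgoing edge."""
    return {obj for _, obj in graph.get(entity, [])}


def keyword_match(query, text):
    """Naive keyword approach: does the literal query string appear in the text?"""
    return query.lower() in text.lower()


graph = build_graph(TRIPLES)
print(related_entities(graph, "wealth management platform"))
# A literal keyword match sees no connection between the two phrases.
print(keyword_match("best wealth management platform", "high net worth individual"))
```

The graph lookup connects “wealth management platform” to “high net worth individual” through an explicit relationship, while the string comparison finds nothing in common between them.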

The Inadequacy of Domain Authority

For years, Domain Authority (DA) was the central pillar of strategy. AI models interpret authority with more nuance, prioritizing verifiability and specificity.

A specialized SaaS company with a meticulously structured knowledge hub can be chosen as the primary source over a high-DA competitor like Forbes. The AI prefers the specific, machine-readable source over the generalist one.

The Mechanics of AI Visibility Optimization (AVO)

AI Visibility Optimization (AVO) is the strategic discipline of structuring a company’s expertise to become the preferred, citable source for AI answers. It is distinct from SEO.

Achieving a high Share of Answer requires a systematic approach built on three pillars.

Pillar 1: The Knowledge Hub Architecture

A brand’s website must evolve into a structured Knowledge Hub—a centralized repository of domain expertise built for machines first.

- Entity-Centric Structure: Organized around core entities (e.g., “Roth IRA”), not keywords.
- Topic Clusters: Core entity pages supported by granular sub-topic pages.
- Disambiguation: Clear definitions ensuring the AI understands the precise meaning of a concept in your context.
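The three properties above can be modeled and checked mechanically. The sketch below is a hypothetical content model, not a standard: `EntityPage`, `SubtopicPage`, `audit_cluster`, and the URL slugs are illustrative names. It flags two structural gaps: a core entity page with no disambiguating definition, and a sub-topic page that does not link back to its pillar.

```python
from dataclasses import dataclass, field


@dataclass
class SubtopicPage:
    """A granular page answering one question inside a topic cluster."""
    slug: str
    question: str
    links_to_pillar: bool = True  # does it link back to its core entity page?


@dataclass
class EntityPage:
    """A core entity (pillar) page in a hypothetical Knowledge Hub."""
    entity: str
    slug: str
    definition: str  # disambiguating definition in the brand's own context
    subtopics: list[SubtopicPage] = field(default_factory=list)


def audit_cluster(page):
    """Return human-readable structural problems found in one topic cluster."""
    problems = []
    if not page.definition.strip():
        problems.append(f"{page.slug}: missing disambiguating definition")
    for sub in page.subtopics:
        if not sub.links_to_pillar:
            problems.append(f"{sub.slug}: does not link back to {page.slug}")
    return problems


roth_ira = EntityPage(
    entity="Roth IRA",
    slug="/roth-ira",
    definition=(
        "A US individual retirement account funded with after-tax "
        "contributions, whose qualified withdrawals are tax-free."
    ),
    subtopics=[
        SubtopicPage("/roth-ira/contribution-limits",
                     "What are the Roth IRA contribution limits?"),
        SubtopicPage("/roth-ira/conversions",
                     "How does a Roth conversion work?",
                     links_to_pillar=False),
    ],
)
print(audit_cluster(roth_ira))
```

Running the audit across every cluster in the hub turns the architecture into something that can be verified on each content release rather than reviewed by hand.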

Pillar 2: Content Atomization and Structuring

AI models do not “read” articles; they parse them for discrete information. Content atomization breaks down complex topics into their smallest logical components.

- Instead of a 2,000-word guide, create interconnected nodes answering specific questions (“What is a Policy Enforcement Point?”).
- Wrap these units in structured data (Schema.org). This is the technical equivalent of pre-digesting content for the AI.
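As a sketch of what wrapping an atomized unit in Schema.org markup can look like, the function below emits JSON-LD using the real `FAQPage`, `Question`, and `Answer` types. The helper name and answer wording are illustrative, and whether a particular AI engine reads this markup is not something the engines guarantee.

```python
import json


def faq_jsonld(pairs):
    """Build a Schema.org FAQPage JSON-LD object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


doc = faq_jsonld([
    (
        "What is a Policy Enforcement Point?",
        "A Policy Enforcement Point (PEP) is the component that intercepts an "
        "access request and enforces the authorization decision returned by a "
        "Policy Decision Point (PDP).",
    ),
])

# Embed the result in the page head or body as a JSON-LD script element.
print('<script type="application/ld+json">')
print(json.dumps(doc, indent=2))
print("</script>")
```

Each atomized question becomes one `Question` node, so adding a new unit to the hub means appending one pair rather than rewriting a long article.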

Pillar 3: Verifiability and Source Citation

Building trust with an AI is a technical exercise.

- Cite Primary Sources: Link to research and government stats to allow the AI to follow the chain of evidence.
- Surface E-E-A-T Signals: Use author bylines linked to detailed credential profiles.
- Provide Machine-Readable Data: Present clinical or financial data in structured tables, not just prose.
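The first two signals can also be exposed in structured data. This is a minimal sketch using the Schema.org `Article`, `Person`, and `CreativeWork` types with the `author`, `sameAs`, and `citation` properties. The author, her profile URLs, and the helper name are hypothetical; the IRS publication is simply an example of a primary government source.

```python
import json


def article_jsonld(headline, author, sources):
    """Build Schema.org Article JSON-LD exposing a credentialed author and cited sources.

    `author` is a dict with "name", "profile_url" and "same_as" (a list of URLs);
    `sources` is a list of (name, url) pairs for the primary sources cited.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author["name"],
            "url": author["profile_url"],  # detailed credential profile page
            "sameAs": author["same_as"],   # external listings that confirm identity
        },
        "citation": [
            {"@type": "CreativeWork", "name": name, "url": url}
            for name, url in sources
        ],
    }


data = article_jsonld(
    headline="Roth IRA Contribution Rules Explained",
    author={
        "name": "Jane Doe",  # hypothetical author
        "profile_url": "https://example.com/authors/jane-doe",
        "same_as": ["https://example.org/registry/jane-doe"],
    },
    sources=[("IRS Publication 590-A", "https://www.irs.gov/publications/p590a")],
)
print(json.dumps(data, indent=2))
```

For the third signal, tabular figures can be published alongside the prose in a machine-readable form such as an HTML table or a linked dataset, so the numbers do not have to be extracted from sentences.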

Measuring and Strategizing for Share of Answer

Measuring Share of Answer requires programmatically querying AI models to track citation frequency versus competitors. A strategic framework for 2026 should include:

- Identify “Answer Territories”: Map the critical user questions where your company must be the definitive answer.
- Conduct an AVO Audit: Analyze content for machine-readability and entity coverage.
- Develop the Knowledge Hub Roadmap: Invest in architecting a centralized hub. This is infrastructure, not a campaign.
- Measure and Iterate: Monitor your Share of Answer and refine structuring tactics based on model behavior.
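A minimal sketch of the measurement step, assuming AI responses have already been collected by some monitoring job. How each engine is queried is out of scope here, and `LoggedAnswer`, `share_of_answer`, the domains, and the engine labels are all hypothetical. The sketch treats each (query, engine) response as one observation and counts a domain as the primary source only when it is the first citation listed.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class LoggedAnswer:
    """One AI response captured by a hypothetical query-monitoring job."""
    query: str
    engine: str
    cited_domains: list[str]  # in the order the engine presented them


def share_of_answer(answers, territory_queries):
    """Percent of in-territory responses in which each domain is the first citation."""
    relevant = [a for a in answers if a.query in territory_queries]
    if not relevant:
        return {}
    primary = Counter(a.cited_domains[0] for a in relevant if a.cited_domains)
    return {domain: 100 * n / len(relevant) for domain, n in primary.items()}


logs = [
    LoggedAnswer("best wealth management platform", "engine-a", ["brand-a.com", "brand-b.com"]),
    LoggedAnswer("best wealth management platform", "engine-b", ["brand-b.com"]),
    LoggedAnswer("what is a roth ira", "engine-a", ["brand-a.com"]),
    LoggedAnswer("what is a roth ira", "engine-b", []),
]
territory = {"best wealth management platform", "what is a roth ira"}
print(share_of_answer(logs, territory))  # {'brand-a.com': 50.0, 'brand-b.com': 25.0}
```

Responses with no citations stay in the denominator, so they lower every brand’s share instead of being silently dropped. Re-running the same territory on a schedule turns the “Measure and Iterate” step into a trend line per competitor.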

The competitive moats of the next decade are being dug now, and they are built from clean, structured, authoritative information. The companies that become the definitive answer in their domain will consolidate market share in a way we haven’t seen since the dawn of the internet.